Project IV 2014-2015

Neural Networks on Steroids

Description

When you use Siri on your iPhone, let Google find images similar to a photo of your dog, or ask Amazon to recommend items for you to buy, you are likely to be using a revolution in neural network technology known as "deep learning".
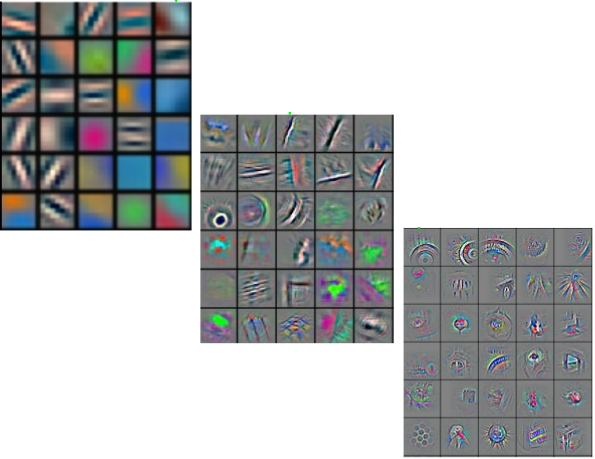

Neural networks used to be the cool thing back in the 1980s. However, thanks to some strong mathematical theorems showing their limitations, they had all but disappeared from the radar by the end of that decade. Now they are back: a number of crucial mathematical insights have changed the scene dramatically during the last 10 years, and startups and big multinationals alike are investing heavily in deep learning.

In this project you will learn the mathematical reasons why old-fashioned neural networks perform so badly, and get hands-on experience with the mathematics underlying the new deep learning methods. You will study the mixture of physical and mathematical ideas underlying much of the hype, and also get to see some of the things that still do not work...

Prerequisites

Willingness to play with the maths on a computer is a big plus (you can use whatever tool you like, be it a full-fledged programming language or a tool such as Matlab or Mathematica). It will bring the theory to life.

Some background material

- Reducing the dimensionality of data with neural networks, by G. E. Hinton and R. R. Salakhutdinov.

- Brains, sex and machine learning, by G. E. Hinton.